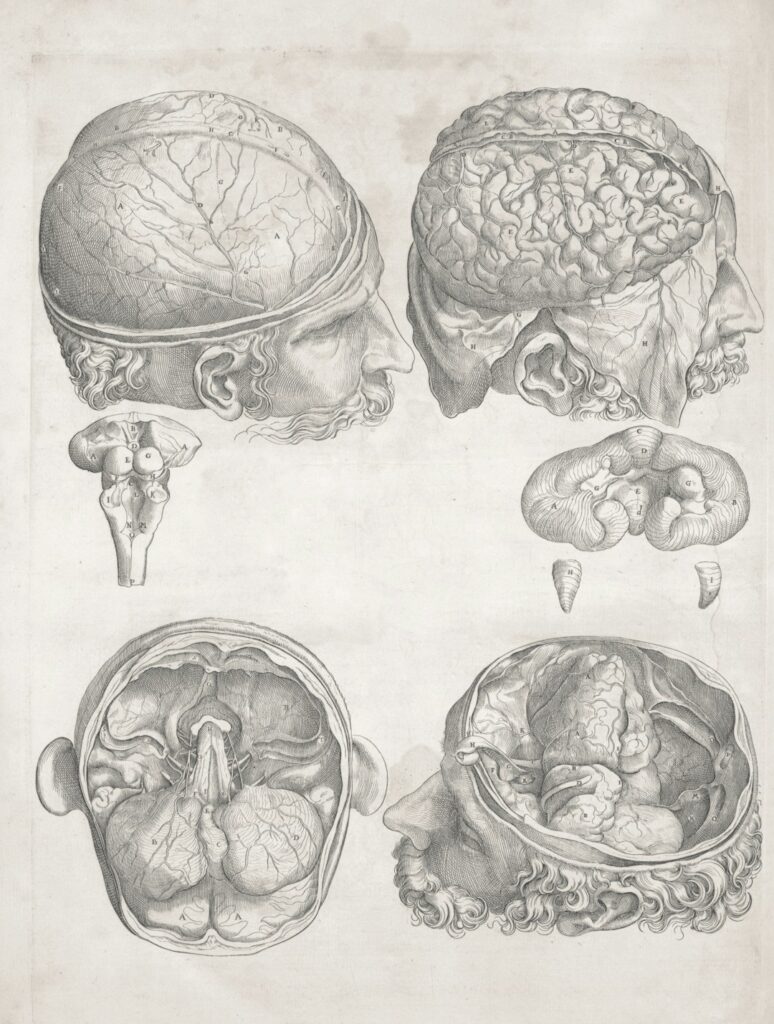

How our brain is put together has been known for some time, but how the brain works remains the final frontier in science. Patom theory changes that. Photo by The New York Public Library on Unsplash

Today’s article continues with the analysis of how our brain works.

It continues from the last article, which showed what our brain does. Its purpose is to persuade you that the cognitive science behind Patom theory (PT) is the best and only brain model yet. It also explains PT as groundwork for a model of human language in an upcoming article.

How brains work

There are three sections in this article to make the case:

- What we know our brain does (cognitive science) PART 1

- A thought experiment to demonstrate additional brain capability PART 1

- Inferring the pattern-atom model (Patom) from this evidence. PT combines what we know from the first two points. PART 2 (THIS ARTICLE)

3. Pattern-Atoms (The Patom Brain Model)

Given what we see happens to our brain from damage and what monitoring shows, a thought experiment further develops the scientific model. In other words, the scientific process: observation first and then model development. Then iterate.

Let’s do the analysis of our observations to create PT in sequence:

- What we need to explain

- A model for Regions

- A model for Sensors

- Successful Evolution of Sensors and Recognition

- Explaining Plasticity of Regions

- Why Computers are Unlike Brains

- Patom Theory Summary – All a brain does is:

“Store, match and use hierarchical, bidirectional linked sets of patterns made of sets and lists”

A. What do we need to explain?

What’s a pattern?

Brains start with sensor inputs and recognize them in regions. Brains move the body with muscle contractions.

At a high level, the brain enables prey to be captured and predators to be avoided. Survival is the ultimate measure of success, and it is easy to measure: did the animal survive long enough to reproduce?

Neurons are individual signaling devices. They activate and then stop.

When your eyes are exposed to an image of, say, your mother, your visual system recognizes something inside the activations. The recognition in the visual area that comes from your mother’s image is called a pattern in this theory. This is unlikely to be a mathematics problem because of the assumption that each sense is handled the same way. What is the mathematics of patterns that is common between vision and hearing? Perhaps your brain regions store a number of different patterns to recognize your mother. The requirement is for the pattern to be sufficient to recognize her again, not to be a visual facsimile of her.

Similarly, when she speaks, the recognition of her voice’s specifics that differ from other voices is a pattern. These are not just surface patterns.

The building blocks will be shared between similar patterns, which leads to the concept of icons. Icons are a term that captures the similarity between different patterns. Rhymes share similarity in sound, such as shared phonemes, in some sequence. Harmonies share combinations of sounds such as chords and tonalities.

Patterns combine as well, but not in the computer way of encoding them. Linking one pattern to others to form combinations better reflects the observations of brain region function. For example, a region that connects to both your visual and auditory regions represents your mother as a multisensory object. Its recognition connects back to the visual and auditory regions that recognize her details in each modality. This multisensory region stores patterns as well, in this case made up from those other patterns.

Pattern combinations

Matching patterns in sensory inputs, moving muscles for motor control, and consolidating multisensory patterns are the building blocks of a high-level brain like ours. Under the Patom hypothesis, the brain is a pattern-matching system, which aligns with our observations.

There is a lot of information about the level of recognition that shows matched patterns are sufficient to enable replication in AI.

The same type of material that recognizes sensory input also generates motor control: our cerebrum’s cortex. Cognitive science needs to explain a common function that deals with simple sensory patterns, complex multisensory patterns, and patterns that generate complex motion, as in gymnastics and car driving.

If we scan the brain of a gymnast and ask them to think about a routine, we see regions activated, like in their cerebellum, that help coordinate motion in us all. The activation of these regions suggests that there are stored patterns that enable complex functions.

Patterns are central to a model that explains it all and that avoids the pitfalls of computing.

Exploring regions further

The high-level question to discuss is what those regions are doing. How do we explain what a brain is doing that is consistent with what we observe?

Again, our senses send streams of activations into our brain. Our other brain regions receive these signals and generate activations in response and send them to a range of other brain regions based on what the region connects to. In Patom theory, the response is based on matched patterns. A key feature of brain function relates to region loss:

We have seen that the loss of a region removes that capability and the recall of it, as well!

If we lose the ability to recall stored memories as a result of region loss, that supports the concept that each region stores its own patterns and doesn’t encode and send them.

In the human cortex, neurons need a period of recovery after firing. They can fire up to 1000 times a second, and each of these signals can be considered a pattern or a part of one being matched which is then input for additional patterns of combinations. My work is theoretical and that’s where I leave it.

Motor control – switched direction

The reverse is possible too, as seen in an animal’s motor control. On the output side, the signals can enable muscles to activate.

Notice that the signal of a transducer neuron, regardless of whether it is activated by the amount of red light or the amount of low-frequency sound, is one or more neurons’ activations. Our brain only ever receives activations, not the source itself, such as light or sound.

There is a region for each sense: vision, hearing, balance and so on. Their locations vary from person to person. The loss of a region not only removes the function, but also the ability to recall that function. In color loss, for example, an artist not only cannot see color, but also can no longer imagine color.

The working hypothesis in PT is that each region stores patterns found in the sense. By definition, the patterns must be in the input source, and for senses are therefore sensory patterns. Patterns are either snapshots – sets of active elements in the input – or sequences – lists of patterns in the input. Patterns need to be sufficient to match again, not replications of the originals.
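The distinction between snapshots and sequences can be sketched in code. The following Python is a minimal illustration only. It assumes that input elements can be named with strings and that a stored pattern matches whenever all of its elements are present in the input. The type and function names are hypothetical and are not part of Patom theory.

```python
from typing import FrozenSet, Iterable, Tuple

# Hypothetical types for illustration; the names are assumptions, not PT terms.
Snapshot = FrozenSet[str]                 # a set of active elements at one moment
SequencePattern = Tuple[Snapshot, ...]    # an ordered list of snapshots


def matches_snapshot(stored: Snapshot, observed: FrozenSet[str]) -> bool:
    """True when every stored element is active in the observed input.

    The stored set only needs to be sufficient to match again,
    so extra detail in the input does not prevent a match.
    """
    return stored <= observed


def matches_sequence(stored: SequencePattern,
                     observed: Iterable[FrozenSet[str]]) -> bool:
    """True when the stored snapshots are matched in order (gaps are allowed)."""
    remaining = iter(observed)
    # Each stored snapshot consumes input frames until it finds a match.
    return all(
        any(matches_snapshot(s, o) for o in remaining)
        for s in stored
    )


face = frozenset({"eyes", "nose", "mouth"})
seen = frozenset({"eyes", "nose", "mouth", "hat"})

moving_away = (frozenset({"box", "large"}), frozenset({"box", "small"}))
frames = [
    frozenset({"box", "large"}),
    frozenset({"box", "medium"}),
    frozenset({"box", "small"}),
]

print(matches_snapshot(face, seen))           # snapshot: a set of elements
print(matches_sequence(moving_away, frames))  # sequence: a list of snapshots
```

Subset matching is a deliberate simplification here: it stands in for the idea that a stored pattern is enough to recognize the input again without being a replica of it.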

Sequences allow sets of different things to represent a single thing. A box that is moving away can have a number of different patterns matching that single object. The system is fundamentally combinatorial as objects at one level combine to comprise objects at another level.

‘Your mother’ is a multisensory pattern (its meaning) and an auditory pattern. The auditory pattern is made of words. The multisensory pattern is what it refers to – a specific individual. Her face can be recognized as a visual pattern, but one that is uniquely connected to the multisensory one.

Regions signal when a pattern is matched

The region signals when a pattern is matched. This signal travels forwards (bottom-up matching) and backwards (top-down matching) to connected regions. The benefit of this matching sequence is that context plays a role in ensuring the match is consistent with the current situation, while the low-level input ensures the match is consistent with what is actually there.

Unlike today’s generative AI that seeks to provide responses based on token sequences, our brain stores the context of who and what is happening now in addition to low-level sensory information to validate our understanding.

Patterns are stored as the set of patterns connected to them

‘Your mother,’ the thing referred to, is a single pattern in a brain. There are no copies. Relationships connect directly to this pattern using standard associations – ‘is’, ‘has’, ‘does’. We will come back to this when language function is discussed. Relations can be added endlessly, but of these kinds, not others.

Because each pattern is stored only once per brain, patterns are atomic. Patterns combine in hierarchies, giving them massive generalization. For example, a dog has four paws, two eyes, one head and one tail. By associating a new entity with ‘dog’, the entity inherits all defaults of the category. That’s a very quick way to learn.
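As a rough sketch of how a single ‘is’ association could pass on a category’s defaults, consider the Python below. The `Pattern` class, its `is_a` and `has` fields and the `default` method are hypothetical names chosen for illustration. They are assumptions, not a description of how PT or any brain implements this.

```python
class Pattern:
    """A single stored pattern with 'is' and 'has' associations (illustrative)."""

    def __init__(self, name):
        self.name = name
        self.is_a = []   # 'is' links to category patterns
        self.has = {}    # 'has' links: part name -> count

    def default(self, part):
        """Look up a part locally, then follow 'is' links up the hierarchy."""
        if part in self.has:
            return self.has[part]
        for category in self.is_a:
            found = category.default(part)
            if found is not None:
                return found
        return None


# The category is stored once.
dog = Pattern("dog")
dog.has.update({"paws": 4, "eyes": 2, "head": 1, "tail": 1})

# A new entity needs only one link to inherit every default.
rex = Pattern("Rex")
rex.is_a.append(dog)

print(rex.default("paws"))   # inherited from 'dog'

# A specific observation overrides the inherited default.
rex.has["paws"] = 3
print(rex.default("paws"))
```

The point of the sketch is that nothing about the dog category is copied into the new entity. The link is enough.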

This model enables plasticity, since it relies on the source finding the pattern that requires connectivity, but the region itself does the work of storing and matching patterns.

Patterns are stored where matched, and accessed with reverse links

‘Your mother,’ the thing referred to, is a high-level pattern, but it isn’t an encoded pattern. There is no visual face stored in it. To access vision, the reverse links to visual memory are initiated. Those links access the ideas, as needed, and are sufficient from impressions to match and therefore identify the multisensory pattern as well.

Patterns, not processes (no programs to create!)

Patterns store, match and use inputs received in a consistent way. This removes the need for algorithms to deal with vision differently to auditory patterns. Any memory connected can therefore be used for any region. This is a significant simplification over the computational model.

Equally, plasticity is explained as the training of a region on patterns below it and those above it in the hierarchy. There is no need to create programs as a part of brain region allocation.

Encoded representations are not required, since they are recognized as combinations from the inputs below. In high-level objects, they are the cumulative patterns across the range of senses.

Let’s look at an example.

In cars, steering is a combination of sensory patterns with motor patterns integrated. Moving the steering wheel left or right is connected to visual changes such as the angles of the median strip against the visual field. The balance sensors in our head feel the forces of a change in direction. Moving the wheel back is reflected in another visual change. The experience of the changes is known as ‘learning to drive’ and the more experience received the more accurate our driving becomes.

There are no limitations on what patterns are possible, provided they are found in sensory input. This enables a large range of possible future skills.

This is a starting point for Patom Theory. Let’s look into the details of regions and plasticity to explore the radical difference between it and the model of computation.

Proximity of Regions

It is intriguing to see that some regions for certain patterns are close together, or at least directly connected by neurons that project between regions. Our brain has regions that seem to be dedicated to connecting regions together, such as our thalamus. This makes sense to enable evolution to connect critical regions without needing neurons to somehow work their way to targets directly.

The most obvious regions that are in proximity are the primary motor cortex (M1) on our frontal lobe and the primary somatosensory cortex (S1) on our parietal lobe. They are physically right next to each other, but regions communicate via connected neurons. Without connections such proximity is meaningless, but as luck would have it, there are connections.

The generation of speech (Broca’s region) in the frontal lobe and its comprehension (Wernicke’s region) in the temporal lobe are separated by some distance. Fortunately, there is a bundle of connections conveniently providing connectivity, the arcuate fasciculus (AF). Perfect.

There are many brain regions that are needed in theory in order to consolidate senses, and in our anatomy such regions can be seen.

B. Model for Regions

We saw how brain function comes from regions in our cortex. These regions look like the same material and yet perform radically different functions. They are also located in different places in the cortex in different people. Broca’s region is typically located in the left hemisphere only, but sometimes in the right hemisphere, and there are other variations especially following brain damage.

How can this work? Let’s compare the traditional processing model with Patom theory.

Hypothesis 1: A digital computer

A computer starts with a program. A typical function is for the program to monitor for I/O: input from a keyboard, touch screen or microphone, for example. Input is delivered as bits, encoded sequences whose code is defined in advance. ASCII and Unicode are examples of standards that define encoded characters.

Do our eyes encode the world like this? There is a fovea (central high-definition area) and saccades (rapid movements of your eye). There are around 125 million photoreceptive cells/neurons, rods and cones, that convert light into electrical signals. The anatomy itself means vision isn’t like receiving the pixels in a high-definition computer screen.

Do our ears encode sound like a computer? Computer audio also follows standards that cover sample rate (the number of samples per second, today from 44.1 kHz), bit depth defining the number of bits in each sample (today, from 16-bit, which covers ~65,000 levels) and format (uncompressed and compressed formats). In our ear, there are around 16,000 cilia (hairs) in the cochlea that convert sound vibrations into electrical signals. Given variations in ears, the signals sent to the brain will have variations and yet we recognize the phonemes in a language as well as a wide range of sounds from Mozart to a dog’s bark, to the starting sound of a car engine.

Here’s the puzzle. If the neurons in our eyes transmit signals to the cortex, and the neurons in our ears transmit signals to the cortex and the cortical regions look the same, how does it work?

With a computer, encoded receipt of information would be processed, often by storing the input in a database. Sound would store sound-formatted data in its database and vision would store visual data in its different database. Programs, written by humans, would control each database.

What does the encoding of 125 million visual sensors look like and why does its cortex seem to perform the same function as encoded auditory hair stimulus from our ear? One region recognizes vision and another identical region recognizes sound. How does the same algorithm recognize different encodings?

So far, there isn’t a defined computer-like model to explain these 2 regions, and of course any theory also needs to explain all the other sensors in our body for balance, taste and the others. The region theory must also explain muscle control in our body with dedicated cortex. It must also explain other sensor collections from around our body. A good question is why the brain’s voluntary motor control (the motor strip) is next to the brain’s somatosensory cortex. It’s because they are functionally interconnected. Movement and senses work together to ensure our body is positioned correctly during movement by sensing positions.

Loss of regions also loses the imagining of the lost function.

This was demonstrated in an artist who lost the regions for visual color. Their ideas became colorless as well as their impressions. If patterns were encoded, the loss of a region wouldn’t lose part of the pattern because it would already be represented in the encoding.

On this basis I reject the computer model with encoding and processing to handle our visual and auditory senses and their recognition in the brain’s cortex. The task of encoding and programming these different functions has never been done with human-like accuracy, in any case. There must be another way.

Hypothesis 2: Something not computational? What is it?

Let’s develop another model and call it Patom Theory. The word ‘Patom’ comes from the words pattern plus atom. Pattern-atoms represent the building blocks of the theory, and so it is called Patom theory for short, or just PT.

We start with sensors and regions. The regions are very different in function as vision is different to hearing, but they look like they do the same thing. Encoding on the scale of human sensors exceeds the kinds of data densities we use in televisions and audio equipment today, with vision including over a hundred million photoreceptors compared to a very high-definition monitor at 4K that uses only 8.3 million pixels.
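The figures quoted in this article can be checked with a few lines of arithmetic. The Python below is only a back-of-envelope comparison. The photoreceptor count is the approximate figure used earlier in this article, and 3840 × 2160 is the standard 4K UHD resolution.

```python
# Approximate figure quoted in this article for rods and cones.
photoreceptors = 125_000_000

# 4K UHD resolution (3840 x 2160 pixels).
pixels_4k = 3840 * 2160

# Number of distinct levels at 16-bit audio depth.
levels_16_bit = 2 ** 16

ratio = photoreceptors / pixels_4k

print(f"4K pixels: {pixels_4k:,}")                    # 4K pixels: 8,294,400
print(f"16-bit levels: {levels_16_bit:,}")            # 16-bit levels: 65,536
print(f"photoreceptors per 4K pixel: {ratio:.1f}")    # photoreceptors per 4K pixel: 15.1
```

On these numbers, the eye has roughly fifteen photoreceptors for every pixel on a 4K screen.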

If we lose an eye, we lose part of our sight. Equally we experience blindness if the cortex the eye connects to is lost, even if the eye itself is undamaged.

If the storage of patterns is used where matched, all that is being sent is the set of active patterns as a set of matched signals. That removes encoding completely and retains access to the patterns matched by accessing the originals with reverse links. Bidirectional patterns eliminate encoding! That is, recognition comes from original patterns and recall comes from the reverse links on the set matched.
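To make the contrast with encoding concrete, here is a minimal Python sketch in which a high-level object holds only links into the regions that matched, never copies of the sensory detail. The `Region` and `Brain` classes and their methods are hypothetical names for illustration. The sketch assumes patterns can be represented as sets of named features.

```python
class Region:
    """A region keeps its own patterns; nothing is copied out of it."""

    def __init__(self, name):
        self.name = name
        self.patterns = {}   # pattern id -> features, stored only here

    def store(self, pattern_id, features):
        self.patterns[pattern_id] = frozenset(features)
        return (self.name, pattern_id)   # a link to the pattern, not a copy


class Brain:
    def __init__(self):
        self.regions = {}
        self.objects = {}    # object name -> list of links into regions

    def add_region(self, name):
        self.regions[name] = Region(name)
        return self.regions[name]

    def recall(self, obj):
        """Follow reverse links; a lost region contributes nothing."""
        recalled = {}
        for region_name, pattern_id in self.objects.get(obj, []):
            region = self.regions.get(region_name)
            if region is not None:
                recalled[region_name] = region.patterns[pattern_id]
        return recalled


brain = Brain()
color = brain.add_region("color")
shape = brain.add_region("shape")
brain.objects["apple"] = [
    color.store("c1", {"red"}),
    shape.store("s1", {"round"}),
]

before = brain.recall("apple")
del brain.regions["color"]     # simulate loss of the color region
after = brain.recall("apple")

print(sorted(before))   # regions that contribute before the loss
print(sorted(after))    # regions that contribute after the loss
```

Because the object stores links rather than an encoded copy, removing the color region removes both the recognition and the recall of color, which is the behavior described for the artist who lost color vision.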

What if sensors send to the cortex, and the region they connect to stores a recognizable pattern of what it receives only if the input is new, and otherwise matches the stored pattern and signals to downstream regions? Each subsequent match is identical to the first match since it uses the same signal.

The idea that our brain only stores enough to recognize an object again comes from psychology as seen with the ‘recall experiment’ where someone draws a common object like a one-dollar bill from memory. The result is a highly impoverished sketch compared with the real object, but one suitable for accurate recognition.

C. Model for Sensors

Our brain is said to be integrated with our sensors. The sensors are just specialized neurons that activate when their transducer matches. In our eyes we have transducers that convert light and color into signals. The signals are neurons that are activated based on the receipt of the right kind of input—add red, green or blue inputs and your brain receives signals of the detection of color at the site of the sensors.

There is no fundamental difference between taste, vision, heat or auditory signals! All just produce neuron activations. There is a set of neurons that activate based on input, but inside our brain, the signals look similar. On or off. Firing or inactive. Repeating or not.

How does our brain deal with the receipt of signals from unknown sources to recognize the world?

The approach is always the same: store and signal if new, match and signal if old. Store, match and use. The input layer stores patterns and then signals. The next layer receives the signal. The brain anatomy signals back and forward when new signals are matched to facilitate both bottom-up and top-down matching.

Let’s use the same analysis as for regions. Today’s roboticists use the computational model, or the statistical computational model with machine learning, to match previously received data. This is always bottom-up matching. Top-down adjustment happens only during training. Brains are more general, incorporating context to match top-down patterns in addition to bottom-up-only recognition. Always learning, always on-the-fly, in context to ensure accuracy.
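The store, match and use cycle can be sketched as a small Python class. This is a toy under stated assumptions: inputs are sets of named elements, matching is exact, and only the bottom-up direction is shown. The `Layer` class and the `present` helper are hypothetical names, not part of PT.

```python
class Layer:
    """Stores each new input pattern once and signals on every match."""

    def __init__(self, name):
        self.name = name
        self.known = {}   # stored pattern -> signal sent downstream

    def receive(self, active):
        pattern = frozenset(active)
        is_new = pattern not in self.known
        if is_new:
            # Store: fix the signal this pattern will always produce.
            self.known[pattern] = f"{self.name}#{len(self.known)}"
        # Match and use: the same signal goes out every time.
        return self.known[pattern], is_new


input_layer = Layer("input")
next_layer = Layer("next")


def present(active):
    """Pass an input through both layers and return the final signal."""
    signal, _ = input_layer.receive(active)
    return next_layer.receive({signal})


first = present({"edge", "red"})    # new: stored, then signaled
second = present({"red", "edge"})   # old: matched, same signal as before
other = present({"edge", "blue"})   # a different pattern gets its own signal

print(first, second, other)
```

The second presentation produces exactly the signal of the first, which is the sense in which each later match is identical to the original one.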

Hypothesis 1: A digital computer

In this model, the transducers encode the signals sent into the brain with details of their type. Visual signals are encoded with visual details while auditory signals are encoded with auditory details. When the signals arrive in the connected region of the cortex, that region unpacks the encoded signal with a program and then re-encodes the signal for use around the brain.

The problem with this model is that it requires a lot of data manipulation in order to emulate a computer. And we only propose encoding in order to emulate the computer metaphor.

It appears far less likely that the computational model applies to a system that evolved over time across different modalities. Today’s paradigm of introducing a computer appears unnecessarily complex and wasteful, and it creates unsolvable problems like how to encode different senses for handling by similar regions.

Hypothesis 2: Something not a computer, but what else is there?

The starting point for sensors is the evolution of the transducers! The conversion of sound to an auditory signal is amazing, as are the other sensors that make up senses. The output is sent to the brain, where it reaches a cortical region.

What does the region that accepts the signal do? Its job can be simply to find patterns. Has this been seen before? If so, send a new signal further into the brain to do something with it. Why not assign the region to process the signals with some kind of decoding process? The different senses would require different regions to perform different functions, but all regions look the same.

It is easier to assume that the regions of the cortex do roughly the same thing, rather than some kind of specific program for each region based on a knowledge of the kind of signal received.

In the real world, some bats evolved a sonar-like system to navigate in pitch-black caves. How would a region develop for that if not simple? How, too, did some whales develop that capability for diving in the deep black ocean?

The plasticity of the brain then follows, since if all regions do the same thing there are no additional steps necessary to store, match and use received patterns.

Regions emerge based on their inputs and connections from anatomical endowment that is sufficient for downstream regions to be created or found.

Is there a complex system in place to match patterns this way? Of course, but the transducers are marvels of complexity, bridging the problems of what sensory inputs to receive and how best to convert them into something useful for a brain!

Evolution reduces the need to repeatedly create a vision system, for example, as earlier animals can pass on their capability to their progeny as a standard feature of evolution.

D. Successful Evolution for Sensors and Recognition

Does evolution create the same results more than once? Sure. Look at sharks and dolphins as an example of fish and mammals evolving to look similar. There are differences, like fish moving their tails from side to side, while dolphins move theirs up and down, but the similarities in overall model are clear.

But there are a couple of important changes in the case of brains. One challenge is the emergence of transducers. Scent receptors as seen in ancient fish enabled olfactory senses. And the system that supports it can be specifically dedicated to olfaction.

The same applies to vision, and hearing, and motion control and other senses.

What does a sense do? It recognizes known objects and actions. Recognizing a shark with vision is useful (like a photographic snapshot) and whether it is moving towards us or away from us is also useful (the change in features of the shark over time, like a sequence of photographic frames). The same applies to our sense of smell. And hearing.

The concept of this kind of sensory recognition is called pattern-matching. Snapshots are patterns of input at a point in time, while sequences of patterns apply consecutively as in motion or increases in intensity (light getting brighter, aroma getting weaker, etc.).

Evolution of the brain shows predictable changes from fish, to amphibians, to reptiles, to birds, to mammals and to humans. The human brain, itself, can be seen as an earlier brain (stem and cerebellum) plus instinctive behaviors (limbic system) plus mammalian extensions in the cerebral cortex. The early regions look like standard, useful extensions to the brain, while the cortex is generalized material that performs a wide range of functions with a common, single implementation. What would a single implementation be?

Hypothesis 1: The information gateway

Computer science has patterns for data optimizations. In the internet, there are implementations of gateways to ensure successful transfer of data without loss between nodes in the network. Error correction codes, for example, and routing methods are designed to get encoded data between points without loss. There are layered models to make the movement of data seamless and certain.

Is it possible that the brain does this to move encoded data around? Even if each gateway is different to deal with the variation in data models in use?

Anything is possible, but computer systems are designed by people to handle data. The brain evolved to integrate a wide range of sensory inputs with the requirements of motor control for effective motion.

Hypothesis 2: The pattern storage and use

Another model is based on patterns. The pattern model stores patterns it receives and signals when a known pattern is matched again. In simple animals, the recognition of predators and prey can take place based on any sensory input (olfactory, visual, hearing, …) and actions are complex sequences of muscle contractions to move towards or away, or to be still.

Survival benefit is important for new mutations and functions to ensure the changes are reproduced in subsequent offspring.

If the cortex, a generalized and useful collection of brain regions that extend the capability of earlier senses, develops to store, match and signal (use the match) when patterns are matched, it enables all new and existing senses to be tracked in the cortex. It still requires connectivity from the sensors to the cortex, but evolution can see to that.

To recall a function, something that is probably less important to survival than recognition, a simple way to perform that is to re-access the original patterns. The recognition of the pattern is now a function of a region, and so the re-use of it later avoids a need to ever encode it.

In practice, our cortex has both forward and backward projections from regions enabling a more sophisticated type of pattern clarification.

E. Plasticity

Computational Independence

Computers allow the movement of workload between compatible machines.

In a computer, any memory location can store any encoded data. Provided the encoding type is known, the data can be used without loss. To access data, the memory location of the data is needed and then the data type. This architecture is a useful pillar in computing.

Another requirement comes from the processing function. Computers are built with a selection of instructions and a tracking mechanism for what the program is up to, what its local storage contains and where its data is. These days, an operating system handles the access to low level instructions as do compilers and interpreters, but the principle remains that the instructions requested must be performed or simulated on the machine.

Brain independence

Brains have never been shifted from one person to another. The scale of such a project is immense and so such transfers remain in the science fiction domain.

In theory, our body is tightly coupled to its sensors and muscles. Motion is voluntary under the control of our nervous system, but paralysis from spinal cord damage is irreversible today. Swapping a brain with another body introduces questions about coordinating a different sized body, for example.

Can we just swap out part of our cortex, or some of our sensors?

The challenge for sensors is in their connection to appropriate cortical material that also retains forward connections to appropriate brain regions. Experiments on cats have shown how brain development re-uses unused regions in ways that appear difficult to overcome. But with the concept of hierarchical patterns, the replacement of regions combines previously stored patterns of inputs with known connections as outputs. To recreate a lost region would require either a retraining session to restore each and every lost pattern, or some kind of engineering to replace the region neuron-by-neuron from an existing model.

Your mother’s face

Now we get to the conclusion that is hard to follow without the example of what you can instantly do when asked to imagine “your mother.”

The computer model doesn’t explain the effects of region loss: if regions encoded information and sent it onward, losing the transmitting region would not also remove the recall of its function. The model comes from our computational metaphor, not brain research.

Let’s assume that regions accept patterns that come from connected regions and use them in their own, composed patterns. The patterns need to be very precise to connect one pattern to its component patterns, but not others. For example, your mother’s face is only associated with her, not with your father as well, even though facial recognition for both is localized.

Region Model (Generalized)

What can we infer when confronted with a localized auditory region, visual reading region, language comprehension region and language production region?

Seeing something, an impression, is received in your occipital lobe and matched patterns are projected up and validated down the chain of regions to accurately identify the object based both on experience and the context of what is expected.

Recalling it now as an idea comes typically from multisensory object recognition that connects back down the chain of regions to its original sensory sources where the pattern’s idea is stored.

This model suggests that a brain isn’t a tape-recording device, storing a perfect facsimile of the world. Instead, the brain recognizes and stores things we experience through our senses as impressions and recalls faint versions of those as ideas.

As our sense’s neurons are activated, they signal to a brain region they project to. This region in turn signals to connected sensory regions where aspects of the sense like color, motion and objects are recognized as was seen in the visual recognition regions.

We will see that a simple extension of the object pattern can be made with an additional auditory pattern in languages. A word’s sound may be different from the sound an object makes, but the two patterns are connected in some way (the sound of the word connects to the multisensory pattern). By connecting senses together, the target of the meaning of a word can be the entire multisensory pattern for an object and therefore access its range of multisensory patterns with a single pattern.

F. Computers are not Brains (linked senses and motor control)

How does a computer today work?

In short, it uses memory to store data (encoded content used as information) and to store a program that controls the operation. A processor follows the program flow, and its arithmetic logic unit (ALU) applies the specified instructions to the data. This is the perfect architecture to emulate what human computers were doing previously.

How is the information stored? Usually in some database, a persisted form. Database designs ensure the format is retained in the database format as well as the data format.

In the case of sensory input, a number of issues arise such as how to encode images (jpg/gif/…) or videos (mp4/avi/…) or muscle movements (?). Programs can be written to manipulate these other requirements, so let’s keep moving.

To create multisensory storage, someone would design a database and ideally, normalize it for efficiency.

Computer Index?

In order to find something, the system would start with some unique identifier, like a customer number. This number could be an index used to identify the personal details quickly by accessing the index to the particular database table.

Brains access everything (no index?)!

In this case you are given language: ‘imagine your mother.’ A computer would need additional information to get access to your mother’s name, but humans work without that. Finding her face amongst all the experienced memories of faces is equally daunting. How would a search through a million images pick out the particular faces that came from your mother?

On this topic, how does seeing your mother lead to recognizing her immediately – history, body, voice? Our brain seems to have instant recall of everything. It identifies her from a voice, from a face, from an outfit, a hat, …

How would this database be designed and what would the indices be for immediate recall?

It is not plausible that our brain has a computer-like index. A different system is likely.

Patom theory (links remove need for a search index)

How can we store such a vast array of related patterns – visual faces, bodies; auditory voices; olfactory perfumes; and even kinesthetic recognition of clothing – not to mention the need to associate these all to each other, and to our languages?

First, we only talk about a database because that’s what computers today tend to use for applications. Machine learning allows direct access to stored patterns, but typically per modality without integration. Perhaps a face is associated with some sequential characters, but not the range of other attributes our brain can access.

Second, we talk about an index as computers today rely on search to rapidly access sorted material efficiently.

So, without a computer metaphor being applied, how do we access these vast amounts of sensory experience and integrated language without an index? The solution proposed in Patom theory relates back to the model described for sensory experience: objects are connected from sensory recognition to object recognition and back.

The sound “your mother” identifies the object representing your mother (presumably in Wernicke’s area or the related entorhinal cortex or hippocampus – regions not yet studied with the view of PT). The auditory sound links to the brain’s comprehension area, which is linked back to each sensory region where sensory patterns are stored. That’s a lot of precise linking in which your mother’s face isn’t confused with your car’s shape or your own face! With PT, the object for your mother is linked to the sensory regions that recognize its sensory elements, enabling many such objects to be recognized in parallel through our senses.

Matching from senses

In PT, matching a part of a pattern matches the whole. Your mother’s face matches your mother in total. This enables language, since extending a multisensory pattern incorporates any associated naming.

Matching from language

Language can match objects by referring to the specific referent, for example, “your mother.” Once access to the multisensory object is found, reverse links to the sensory regions access her multisensory details.

Language can also match objects by referring to sensory icons like “this picture” (of your mother) or other indirect patterns.
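The two kinds of matching can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how PT or a brain implements it: the class `LinkedPatterns`, the `(modality, label)` tuples and the object names `"MOTHER"` and `"CAR"` are all hypothetical.

```python
from collections import defaultdict


class LinkedPatterns:
    """Toy store of two-way links between sensory patterns and whole objects."""

    def __init__(self):
        self.up = defaultdict(set)    # sensory or language pattern -> objects
        self.down = defaultdict(set)  # object -> its sensory and language patterns

    def link(self, pattern, obj):
        # Every link is stored in both directions.
        self.up[pattern].add(obj)
        self.down[obj].add(pattern)

    def match(self, pattern):
        """Matching one part returns the whole object(s) it belongs to."""
        return set(self.up.get(pattern, ()))

    def recall(self, pattern):
        """Follow a part up to its object, then the reverse links back down."""
        return {other for obj in self.match(pattern) for other in self.down[obj]}


store = LinkedPatterns()
for pattern in [("vision", "mother's face"), ("hearing", "mother's voice"),
                ("language", "your mother")]:
    store.link(pattern, "MOTHER")
store.link(("vision", "car shape"), "CAR")
```

Here `store.match(("vision", "mother's face"))` reaches the whole object from a single sense, while `store.recall(("language", "your mother"))` starts from the words and follows the reverse links to her face and voice without ever touching the car.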

G. Patom Theory Summary

Let’s recap now to summarize Patom Theory.

At its core, all a brain needs to do in order to achieve the amazing capabilities we experience is to:

“Store, match and use hierarchical, bidirectional linked sets of patterns made of sets and lists”

One pattern per brain

Having one method to store anything, as patterns, enables our brain to re-use the same material over and over. This gave Patom theory its name: pattern-atoms, in which each atom is unique in a brain. There is only one of each kind of pattern, so any pattern can be found uniquely, with all its associations in one place.

Our entire neocortex is made of the same material that presumably does the same thing to perform radically different functions from vision, to hearing, to language comprehension to motor control, and even to speech. One model reused everywhere, at least in our neocortex, but this means the processing model from computers must go.

When you know about a person, you start to build a connected set of patterns about them – their looks, touch, smell, voice plus what they said and did, and where they said and did it!

PT takes what we experience and enables future recognition in the most efficient way possible through hierarchy and bidirection. With patterns being atoms, the need for search vanishes as finding a pattern from an association is sufficient to access all related patterns.
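One way to picture “one of each pattern” in software is a registry that hands back the same object every time a pattern is requested. The sketch below is an analogy only, and the names `Patom`, `patom` and `associate` are invented for this example.

```python
class Patom:
    """A single pattern-atom holding direct links to its associated atoms."""

    def __init__(self, key):
        self.key = key
        self.links = set()


_atoms = {}  # one entry per pattern, so no duplicates can exist


def patom(key):
    """Return the single atom for this key, creating it on first use."""
    if key not in _atoms:
        _atoms[key] = Patom(key)
    return _atoms[key]


def associate(a, b):
    """Link two atoms in both directions."""
    a.links.add(b)
    b.links.add(a)


associate(patom("mother"), patom("mother's face"))
associate(patom("mother"), patom("mother's voice"))
```

Because `patom("mother")` always returns the same object, every new experience attaches to that one atom, and its associations are read straight from `links` rather than found by searching a collection.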

To recap: Patterns enable what our brain requires. What happens when you know about a person? Typically, you know what their face looks like. What they have done. What they are good at. Where they have been. As there is only one of each kind of pattern, it is like physics – they are atomic. There is only one of each type. Experiences with your mother only connect to this pattern so there are no gaps in experience with any individual.

Critically:

Pattern-atoms create a new system unlike a computer because there is no need for search.

Hierarchical

Your brain receives inputs from the sensors in each sense. These capture details of that sense only. Rather than encoding and sending data around, the cortex stores snapshot and sequential patterns from the sense it connects to. When a pattern is matched, the region signals the match to its connecting regions. And those connecting regions store patterns from the previous regions just as sensory regions store patterns from sensory neurons.

When a pattern is matched, it sends a signal to the next regions and also the previous regions. This is an architecture that aligns with both top-down pattern matching and bottom-up pattern matching. Bottom up recognizes what is received based on what sensory patterns have been received before, while top-down recognizes what was matched based on what is expected in this situation from prior experience.
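A toy version of this two-way signaling, using two regions, might look like the following. The region contents and feature names are hypothetical, and the rule that a pattern matches when any one of its parts is active is a simplifying assumption made for this sketch.

```python
# Each region maps a pattern name to the lower-level patterns it is built from.
SENSORY = {"face": {"eyes", "nose", "mouth"}, "voice": {"pitch", "timbre"}}
OBJECTS = {"mother": {"face", "voice"}}
HIERARCHY = [SENSORY, OBJECTS]  # ordered from the senses upward


def bottom_up(active):
    """Signal forward: return what each region matches, lowest region first."""
    matched_per_region = []
    for region in HIERARCHY:
        # Assumption: a pattern matches when any of its parts is active.
        active = {name for name, parts in region.items() if parts & active}
        matched_per_region.append(active)
    return matched_per_region


def top_down(pattern):
    """Signal backward: return the lower-level patterns expected for a pattern."""
    expected = {pattern}
    for region in reversed(HIERARCHY):
        for name in region.keys() & expected:
            expected |= region[name]
    return expected - {pattern}
```

Calling `bottom_up({"eyes", "nose"})` climbs from features to a face to the object for your mother, and `top_down("mother")` runs the other way, listing the face, the voice and their features as what to expect.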

In our example, your mother is multisensory, but is also an object. Recognition of your mother’s face is visual, but the object can be manipulated independently of its sensory patterns. The sensory patterns making up the whole are available as needed using the reverse links that make up the hierarchy from senses (e.g. vision), to sensory details (e.g. color, shape and motion) to whole objects (your mother, connecting back down to other sensory patterns).

What you see connects up the hierarchy to the overall object as your mother’s face connects to the overall pattern for your mother. Similarly, your mother’s name connects to her overall representation that connects back down the hierarchy to her sensory patterns, such as her face.

Bidirectional

In the example of recognition of your mother from her name, your brain accesses the name, which links to her multisensory pattern object, which is in turn connected to the respective sensory regions.

“Your mother’s face,” the words, identify a particular face associated with “your mother.” Equally the reverse applies: her face identifies your mother, the words, and also all connected ideas of her.

This is bidirectional and we see how the brain uses bidirectional connections everywhere. The hierarchy is bidirectional both in this thought experiment and, in particular, in our neocortex as its typical 6-layer anatomy includes layers for projecting to the next region and back to the previous one.

We will come back to the bidirectional nature of brains in the linguistics topics later in this series. We see endless examples that PT describes, such as sequential words identifying objects and vice versa, and sequential letters identifying words.

Linked Sets (or linksets)

In a computer, we encode data and store it for re-use with an appropriate decoder. This allows us to readily duplicate and send the data anywhere the same way anything is sent.

The brain doesn’t work this way. A piece of a brain is an integrated unit whose loss cannot be recovered, since it stores unique patterns. This isn’t to say there isn’t redundancy in the patterns, only that the loss of an entire region loses its stored patterns both up and down the hierarchy.

To represent a pattern, the idea is to store enough of it to match the pattern again. Sometimes it may take a dozen or a hundred different versions of the same pattern to accurately recognize it in the future.

Patom theory sits a level above the actual mechanisms of the storage method, but brain damage evidence strongly supports PT.

Patterns are hypothesized to be either sets of things (like, say, a set of pixels in a visual screen) or sequences of things (say the pixels changing when a person walks across your field of view). Sequences enable things like syllables making up spoken words, letters making up written words, words making up a sentence or a title, time passages, and so on. Snapshots are sets of things, like the specific people in a meeting, the active red pixels in a camera at a point in time, or a collection of peas on your dinner plate.

When a set or sequence is matched, it signals forward and backwards to allow its combination to be used as bottom-up matching and top-down validation.
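The difference between the two kinds of pattern can be shown with Python’s built-in types. This is a minimal sketch, and the function names and matching rules are assumptions chosen for illustration: a set matches when all of its elements are present in any order, and a sequence matches only when its elements appear together in the stored order.

```python
def matches_set(stored, observed):
    """A snapshot matches when every stored element is present, in any order."""
    return frozenset(stored) <= frozenset(observed)


def matches_sequence(stored, observed):
    """A sequence matches when the stored elements occur contiguously, in order."""
    stored, observed = list(stored), list(observed)
    n = len(stored)
    return any(observed[i:i + n] == stored
               for i in range(len(observed) - n + 1))
```

The letters of “cat” and “act” are the same set, so `matches_set("cat", "act")` is true, but they are different sequences, so `matches_sequence("cat", "act")` is false. That is the distinction that lets letters make up different written words.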

If you ask a child, they would probably point out that their memory is independent of their senses. It is all integrated.

But more than 200 years of medical research shows brain regions specialize. That tells us not only how it functions, but suggests how AI vision could function. Rather than ML categorizing pictures, dog/not dog, our brain recognizes far more and bundles it all together in complex sets enabling human-like accuracy. Compared to ML that connects images to labels like “dog,” an improved model could access the semantic elements like “is/has/does” for improved clarity and generalization. As they say, “a picture is worth 1000 words” because sometimes, the multisensory experience is easier to understand by experience than a description of it in language.

Further Research – Criticize!

Is this model right or wrong? What’s better and why?

In science, there is always opportunity to falsify a model. The computing-brain-model fails for reasons that come from lack of learning, programming, region operation and plasticity. “The brain is a computer” doesn’t explain much and has been unsuccessful at emulating brain function. And simulation is not duplication. The competition against PT is weak or non-existent.

PT explains a lot, but not how neurons do it. There is research needed to consolidate the regions and their degree of plasticity. Where is a table summarizing brain regions that covers some of the diverse models of region distribution?

A model of human brains sets the scene to discuss how our language works and how it evolved. A quick spoiler: the fundamentals of how our brain works fit nicely with how our language works.

PT, like any scientific theory, may be wrong, but the computational model is not a replacement. Ongoing work to support and reject the model sets a roadmap to improve cognitive science.

Next Time – Human Language with PT

Patom theory explains how a brain works, based on pattern matching in a specific way.

As a combinatorial system, pattern atoms connect senses together without encoding, duplication or programming. What does the application of PT do when implementing a human language system, such as that explained by the linguistic science of Role and Reference Grammar (RRG)?

We start with a system of patterns, precisely connected in a brain to identify categories, parts and the actions they take. The use of names and a collection of historically useful concepts from the science of signs fits well.

Evolution of Human Language

What does this say about the evolution of human language? Theory supports a model in which our brain’s representation without language is extremely precise. Labelling the meaning with words enables human language in its fullest form as a small extension.

In Tom Wolfe’s book, “The Kingdom of Speech,” he recounts finding a web page titled “THE MYSTERY OF LANGUAGE EVOLUTION.” The article describes scientists trying to explain how language evolved, but their unusual summary was that they were:

“giving up, throwing in the towel, crapping out when it came to the question of where speech—language—comes from and how it works.”

Language allows us to identify objects, their parts and properties. This includes their sensory memories through Hume’s ideas. These are connected patterns which differ radically between modalities, since vision is very different to sound which is different to feel.

We are now ready to extend this model of how brains work with an old extension to our brain: how languages evolved and work. It starts with a linguistic model that is built around words in language-dependent phrases (morphosyntax) and their meaning representations (semantics) with discourse pragmatics (context).